We are not target practice

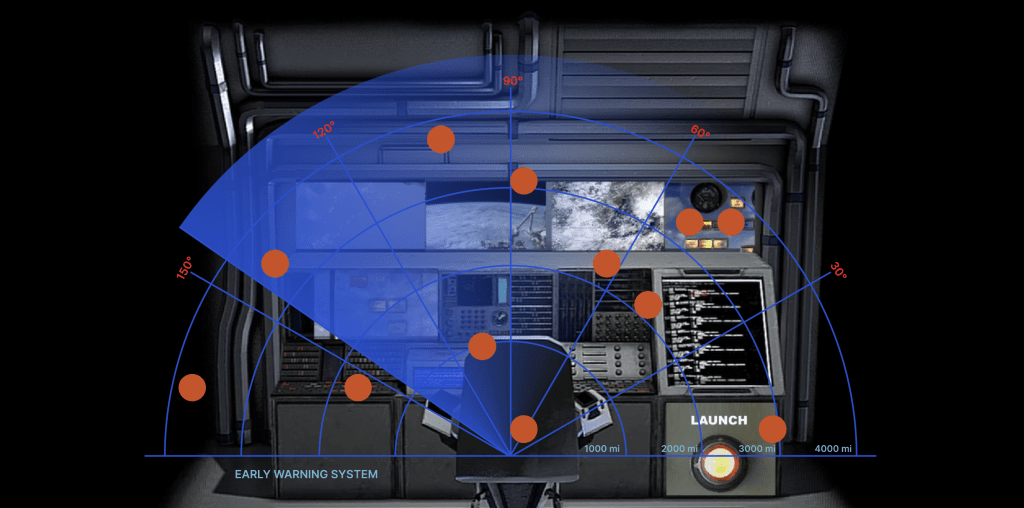

Enter the crushing architecture of an underground bunker, sealed off from events above ground, its only connection with the outside world being flows of data, radar and computer signal processing. A launch button flashes. A dashboard of control panels, blinking lights and satellite imagery lies within reach. The passing of time feels claustrophobic with anticipation and the pressure to act.

My residency started by looking at the 1983 incident inside the Serpukhov-15 bunker in what was then the Soviet Union. The early warning system reported with high confidence an impending nuclear missile attack from the US, which was later found to be merely sunlight reflecting off high-altitude clouds. In the eyes of the computer, the sun and clouds at the autumn equinox became an act of war.

This example shows the extremes of what can happen when data, surveillance systems and computer vision technologies are assumed to be infallible and are granted unquestionable accuracy. It destabilises the idea that data always enables us to see more clearly, and highlights the importance of critical perspectives on data gathering practices.

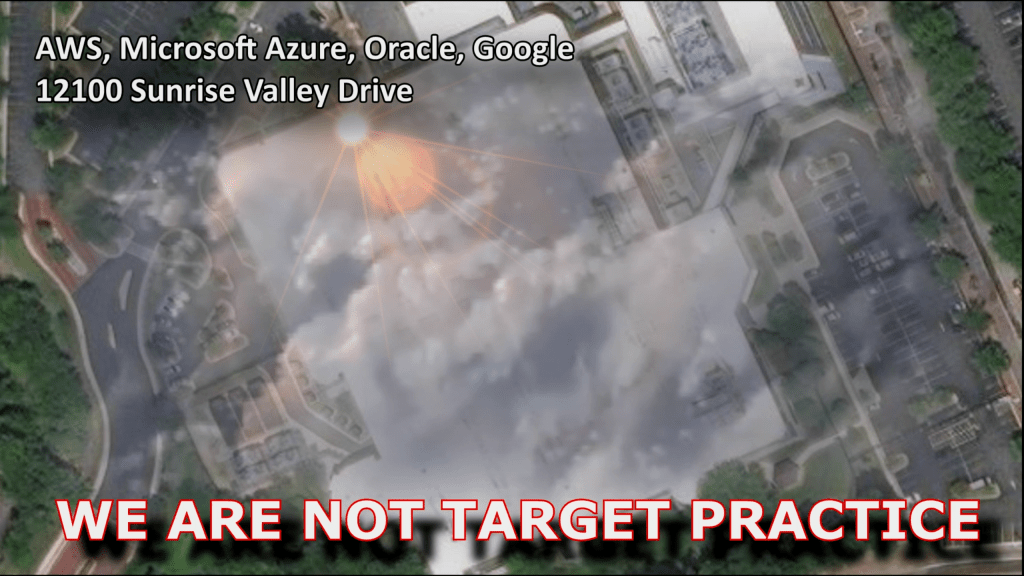

In ‘We are not target practice’ an interface based on radar imagery appears, with its uncanny ability to conceal details and subsume targets into a mere dot. In this online bunker, the dots on the radar are the locations of data centres that make up the digital cloud infrastructure, shown from satellite perspectives and seen through a cloudy haze. The focus here is on Virginia, which has the largest concentration of data centres in the world. These spaces are owned by Amazon, Microsoft, Google and other tech giants that process our personal data: the data that defines who we are and how we are judged by algorithms that make decisions about and for us.

A computer-perceived threat in 1983 nearly resulted in thermonuclear war and the end of the world as we know it. The stakes may be different in our data-hungry digital age, but an over-eager trust in AI combined with misplaced threat perception through total surveillance could also have disastrous consequences.

We are more than the sum of our data. We are more than targets to locate and control.

‘We are not target practice’ was made during an online residency with the wonderful support of Vital Capacities / videoclub.

Some of the studio practice

Adversarial.io is a tool created to evade image recognition technologies and to reveal how differently machines and humans interpret images. Subtle noise added to an image can completely alter how an algorithm classifies it, while to human perception the image barely changes. I found it interesting in the context of my project because of the high confidence with which the bunker's M-10 computer declared its identification of the missiles (which turned out to be sunlight reflecting off clouds). In my experiments, I gathered photos of nuclear missiles which, through such added noise, became sewing machines, freight cars, obelisks and totem poles in the eyes of computer vision.
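For readers curious about how such noise can work, here is a minimal sketch of the general idea. It is not how Adversarial.io is implemented. It is a toy example in the spirit of gradient-sign attacks, assuming a made-up, untrained linear classifier with two invented labels and a random array standing in for a photo. Every name in it (`classify`, `adversarial_noise`, the weights `w`) is hypothetical.

```python
import numpy as np

def classify(w, b, x):
    """Toy linear classifier (hypothetical): positive score means 'missile'."""
    score = float(w @ x + b)
    label = "missile" if score > 0 else "sewing machine"
    return label, score

def adversarial_noise(w, b, x, overshoot=1.1):
    """Return noise that flips the classifier's decision.

    For a linear model the gradient of the score with respect to the
    image is simply w, so nudging every pixel by eps against sign(w)
    shifts the score by eps * sum(|w|). We pick the smallest such eps
    (times a small overshoot) that pushes the score across zero.
    """
    score = float(w @ x + b)
    eps = overshoot * abs(score) / np.abs(w).sum()
    noise = -eps * np.sign(score) * np.sign(w)
    return noise, eps

rng = np.random.default_rng(0)
n_pixels = 64 * 64                               # a flattened 64x64 greyscale image
w = rng.normal(size=n_pixels)                    # assumed, untrained weights
b = 0.0
image = rng.uniform(0.25, 0.75, size=n_pixels)   # stand-in for a real photo

noise, eps = adversarial_noise(w, b, image)
label_before, _ = classify(w, b, image)
label_after, _ = classify(w, b, image + noise)

print(label_before, "->", label_after)
print("largest change to any single pixel:", eps)
```

Because the image has thousands of pixels, a tiny nudge to each one adds up to a large shift in the score. The label flips even though no single pixel moves by more than `eps`, which is the same imbalance between machine and human perception that the missile experiments play with.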

A series of images made by combining maps of Five Eyes surveillance network locations in the UK with maps of cloud optical thickness.